Audio for Video

This is meant to be a simple and non-technical introduction to the recording of sound for filmmakers. I'm trying to avoid the use of formulas and of physical explanations as much as possible.

Table of contents

- 1. Sampling

- 2. Microphones

- 3. Stereophony

- 4. Too Long, Didn't Read

Sampling

The first thing we need to understand is how sampling works. Sampling is the conversion of a continuous function into a series of values. In the case of sound we'll have a microphone that generates a voltage based on the incoming sound waves, and we connect this microphone to some kind of digital recorder.

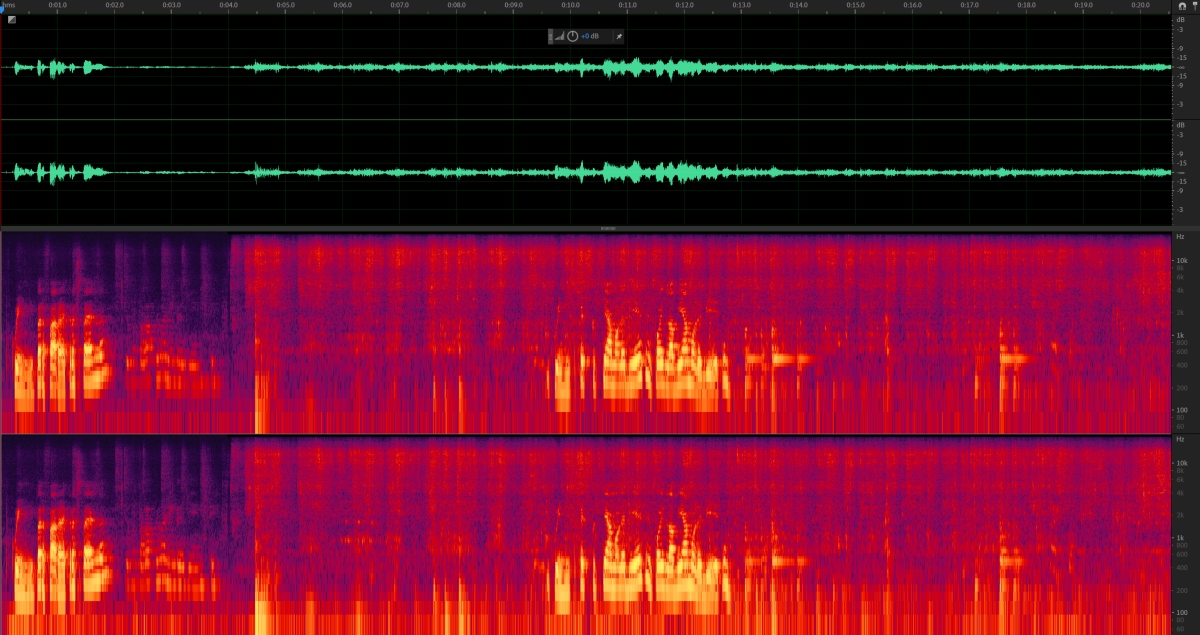

The recorder measures this voltage many thousands of times per second and stores each measurement as a number, called a sample.

As long as the samples are taken often enough, that is, at more than twice the highest frequency present in the signal, we can reconstruct the original voltage from them, and thus the original sound wave.

The human hearing range goes from about 20Hz to 20kHz, so the minimum sampling frequency we need (the Nyquist rate) is just over 40kHz. In practice we can choose between the sampling rate used for audio CDs, 44100Hz, and the one used for video, 48000Hz. My advice is to always use 48kHz. It is possible to resample a file recorded at the wrong sampling rate, but it can lead to timing issues with long recordings.

Higher sampling rates are also possible, but in most cases they're not worth it.
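To see why the sampling rate must be more than twice the highest frequency we care about, here is a minimal sketch of what happens to a pure tone that is too high for the chosen rate: it "folds back" and shows up as a lower, wrong frequency (aliasing). The function name `alias_frequency` is made up for this illustration, and the sketch assumes an idealized sampler; real recorders filter out such frequencies before sampling.

```python
def alias_frequency(tone_hz, sample_rate_hz):
    """Frequency at which a pure tone appears after sampling.

    Assumes an ideal sampler with no anti-aliasing filter
    (a simplification made for this illustration).
    """
    nyquist = sample_rate_hz / 2          # highest frequency that survives sampling
    folded = tone_hz % sample_rate_hz     # sampling cannot tell tones one full rate apart
    if folded > nyquist:
        folded = sample_rate_hz - folded  # tones above the limit fold back down
    return folded


for tone in (1_000, 20_000, 30_000):
    print(tone, "Hz is recorded as", alias_frequency(tone, 48_000), "Hz")
```

At 48kHz everything in the audible range comes through unchanged, while the 30kHz tone is the only one that gets folded.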

The other important sampling aspect to consider is bit depth. To simplify, in the figure above the sampling rate determines the distance between samples on the horizontal axis, while the bit depth determines their distance on the vertical axis. By distance, of course, I really mean resolution. With more bit depth we obtain larger files, but also more dynamic range.

My advice is to choose the highest bit depth allowed by your hardware. It allows you to keep the gain pretty low, so that you avoid clipping, while still having a usable signal. If you can record in 32-bit float you'll have enough dynamic range for pretty much anything.
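The trade-off between file size and dynamic range can be put into rough numbers. The sketch below uses the common rule of thumb of about 6 dB of dynamic range per bit, which applies to integer formats such as 16-bit and 24-bit; 32-bit float works differently and is not covered by this formula. The function names and default values are hypothetical, chosen only for this example.

```python
import math


def dynamic_range_db(bits):
    """Theoretical dynamic range of integer PCM audio, about 6.02 dB per bit."""
    return 20 * math.log10(2 ** bits)


def wav_size_mb(seconds, sample_rate_hz=48_000, bits=24, channels=2):
    """Approximate size of uncompressed audio in megabytes (file header ignored)."""
    bytes_per_sample = bits // 8
    return seconds * sample_rate_hz * bytes_per_sample * channels / 1_000_000


for bits in (16, 24):
    print(
        f"{bits}-bit: {dynamic_range_db(bits):.1f} dB, "
        f"{wav_size_mb(3600, bits=bits):.0f} MB per hour of stereo at 48kHz"
    )
```

Even at 24 bits, an hour of stereo audio is about a gigabyte, which is small compared to video files.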

Microphones

There are a lot of types of microphones out there, but for video work we need to know about just a few of them. We can classify microphones according to their working principle, to their polar pattern and to their form factor.

Working principle

First of all: how do microphones work? There are several common mechanisms (dynamic, condenser, MEMS, the list goes on), but as a rough generalization we have a surface whose movements produce a voltage. The easiest to understand is probably the moving coil microphone, in which a diaphragm is connected to a coil of wire which moves between the poles of a magnet. It's somewhat analogous to a dynamo.

For example, here's the patent for a kind of condenser microphone. In the case of condenser microphones, air vibrations move one of the two plates of the condenser, thus changing its capacitance. Loudspeakers work on the same general principle as moving coil microphones, only in reverse, and if you have a separate microphone jack you can connect your headphones to it and use them as a microphone.

Polar patterns

The next characteristic we need to consider is the directivity pattern. That is, if I move a sound source of constant intensity around my microphone, how loud will it sound in the recording? For most microphones that depends on the angle of arrival of the sound.

Here's an explainer page by Shure.

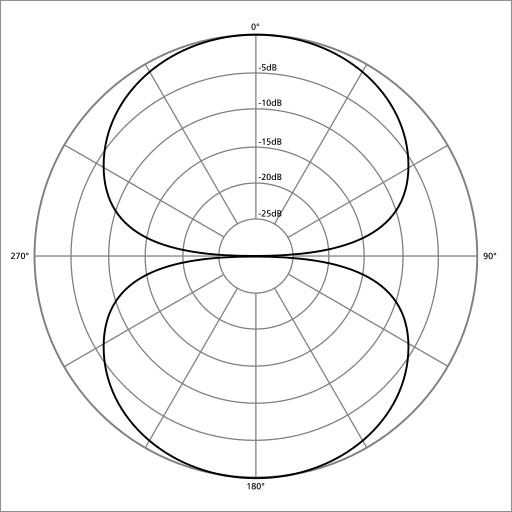

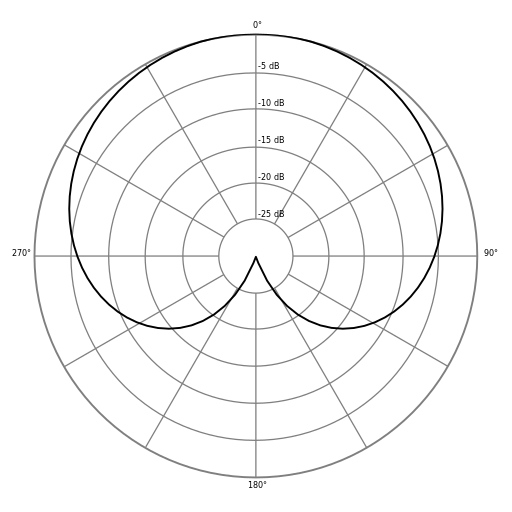

In these figures we are looking at the microphone from the top, and the front of the microphone is towards the top of the page.

Omnidirectional microphones are equally sensitive to sounds coming from any direction.

Figure of eight microphones are sensitive to sounds coming from the front and from the back, but not from the sides.

Cardioid microphones are mostly sensitive to sounds coming from the front, and they reject sounds coming from the back. The cardioid is probably the most common pattern.

Lobar microphones are highly directional: they are sensitive mainly to sounds coming from a narrow region in front of them. This is the polar pattern of shotgun microphones.
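For readers who like numbers, the first three patterns can be approximated with one textbook formula: sensitivity = a + (1 - a) × cos(angle), where a is 1 for omnidirectional, 0.5 for cardioid and 0 for figure of eight. The sketch below is an idealization, not a measurement of any real microphone, and the names `PATTERNS` and `sensitivity` are made up for the example. Lobar patterns do not fit this simple formula and are left out.

```python
import math

# Idealized first-order patterns: the value is the omnidirectional share "a".
PATTERNS = {"omnidirectional": 1.0, "cardioid": 0.5, "figure of eight": 0.0}


def sensitivity(pattern, angle_deg):
    """Relative sensitivity for a sound arriving at angle_deg (0 = front).

    A negative value means the sound is picked up with inverted polarity,
    as happens at the back of a figure of eight microphone.
    """
    a = PATTERNS[pattern]
    return a + (1 - a) * math.cos(math.radians(angle_deg))


for name in PATTERNS:
    row = ", ".join(
        f"{angle} deg: {sensitivity(name, angle):+.2f}" for angle in (0, 90, 180)
    )
    print(f"{name:16s} {row}")
```

The printed table shows the same information as the figures: the cardioid drops to nothing at the back (180 degrees), and the figure of eight drops to nothing at the sides (90 degrees).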

Form factor

Lavalier

Lavalier microphones are usually worn by whoever is speaking in a scene. They can be either omnidirectional or directional; in the latter case we must pay attention to where they're pointing. The most common ones are omnidirectional, in which case we only need to take care to avoid handling noise (or simply clothing brushing against them) and direct air movements. The most common mistake is having people speak directly into the microphone, which can lead to saturation. As a general guideline, try positioning lavalier microphones at chest height, and try to listen to the result before actually committing to the recording.

Shotguns

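Shotguns, often called boom microphones because they are usually mounted on a boom pole, are the most directional kind of microphone generally available to us. They work by cancelling sounds arriving from the sides, using an interference tube placed in front of an already fairly directional capsule. They are lobar microphones.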

Handheld microphones

These are most commonly cardioid microphones, as used in TV interviews or by singers. There are also some side-address figure of eight microphones, but they are mostly used in radio studios, so they are unlikely to be used by filmmakers.

Stereo pairs

Microphones can be combined in arrays to produce a stereo image. For video applications these are usually two cardioids (as in the XY technique) or a figure of eight and a cardioid (as in the M+S, or mid-side, technique).
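Unlike XY, an M+S recording is not directly a left and a right channel: the cardioid points forward (mid) and the figure of eight points sideways (side), and the two must be decoded. The usual decoding is a sum and a difference, sketched below. The function name and the `width` parameter are hypothetical, and real tools may scale the result differently.

```python
def decode_mid_side(mid, side, width=1.0):
    """Turn mid and side sample lists into left and right sample lists.

    left = mid + side, right = mid - side. The width factor (an assumption
    of this sketch) scales the side signal: 0 gives mono, larger values
    give a wider stereo image.
    """
    left = [m + width * s for m, s in zip(mid, side)]
    right = [m - width * s for m, s in zip(mid, side)]
    return left, right


mid = [0.5, 0.2, -0.3]
side = [0.1, -0.1, 0.0]
left, right = decode_mid_side(mid, side)
print("left: ", left)
print("right:", right)
```

One practical advantage of this arrangement is that the stereo width can still be adjusted after the recording, simply by changing how much of the side signal is mixed in.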

Another possibility is to use a pair of binaural microphones. These look just like headphones, and they reproduce the sense of space as heard by the person performing the recording.

Stereophony

Simply put, we hear with two ears. And most of the time we listen with headphones or with stereo speakers, so using two audio channels has a certain elegance. Even if you record in mono (as with most shotguns), you can pan sounds to a rough approximation of the position of the source on screen, creating a naturalistic correspondence between visual and aural positioning. If you see somebody speaking on the right side of the screen it will feel quite natural to hear them speak somewhat to the right. The audience might not notice a central sound, but they will absolutely notice if the sound comes from the wrong side.
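Panning a mono recording is normally done in the editing software, but the idea behind it is simple enough to sketch. The example below uses a constant-power pan law, one common choice among several, so that a sound keeps roughly the same loudness as it moves across the stereo image. The function name `pan_mono` and the position scale from -1 to +1 are assumptions made for the illustration.

```python
import math


def pan_mono(sample, position):
    """Split one mono sample into (left, right) with a constant-power pan.

    position: -1.0 is fully left, 0.0 is center, +1.0 is fully right.
    """
    angle = (position + 1) * math.pi / 4  # maps -1..+1 onto 0..90 degrees
    return sample * math.cos(angle), sample * math.sin(angle)


for position in (-1.0, 0.0, 0.5, 1.0):
    left, right = pan_mono(1.0, position)
    print(f"position {position:+.1f}: left {left:.2f}, right {right:.2f}")
```

A speaker standing slightly to the right of the frame would get a position of around +0.3 to +0.5 rather than a hard +1.0, which tends to sound unnatural on headphones.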

To get a feel for how microphone arrays sound, try the MARRS Microphone Array Recording and Reproduction Simulator.

Too Long, Didn't Read

Always record at 48kHz, with the maximum bit depth available (32-bit float if you can), in the least compressed format (WAV, not MP3). Avoid clipping, wind noise and handling noise. Close miking is your friend, but beware the proximity effect. Always have a backup: audio files are small and recorders are cheap, so just keep it rolling, and record on more than one device. Make sure you're not putting everything on one channel; a mono file is better than a bad stereo file.